Architectural Primitives: Inter-Datacenter Tunnels

At TempoDB, we maintain multiple environments (production, staging, etc.), and each environment lives in a datacenter (Dallas, Seattle, etc.). For the most part, we want strict separation between environments, but we have a growing list of traffic that ought to be allowed to flow between them (see below). We designed a new architectural primitive that lets us securely permit specific traffic while still blocking everything else.

Business Objectives

Right now, each environment (production/staging) is in one datacenter (Dallas/Seattle). What we developed allows us to connect two datacenters, which means that one environment can span multiple datacenters (intra-environment tunnel) or that two datacenters from different environments can be connected (inter-environment tunnel; e.g., a connection between production and staging). This allows features such as:

- The ability to set up streaming replication (master/slave) from production to staging for both HBase and PostgreSQL

- The ability to easily load a complete backup of production into staging, for developer load-testing (made much easier by streaming replication)

- The ability to expand into other data centers on the SoftLayer internet backbone, in order to expose international Points of Presence (POPs). These POPs wouldn’t be full environments, but lightweight proxies that move the SSL handshake closer to the customer, and then rely on faster (but still encrypted) communication within our system

Such a tunnel must satisfy a few expectations:

- It must provide transparent encryption to all traffic going through it

- It must have a firewall on both ends, and disallow everything except explicitly allowed traffic

- It must be highly available
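The second expectation (deny everything except explicitly allowed traffic) can be sketched as a default-deny allowlist check. This is only an illustration of the policy, not our Vyatta configuration: the `Rule` type, the `is_allowed` function, and the subnets and port below are all hypothetical. It is also stateless, whereas a stateful firewall would additionally track connections so that replies to allowed traffic can return.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    src: str    # source network, CIDR notation
    dst: str    # destination network, CIDR notation
    proto: str  # "tcp" or "udp"
    port: int   # destination port


# Hypothetical allowlist: production (10.1.0.0/16) may reach
# a database port in staging (10.2.0.0/16). Nothing else is listed.
ALLOWED = [
    Rule("10.1.0.0/16", "10.2.0.0/16", "tcp", 5432),
]


def is_allowed(src, dst, proto, port, rules=ALLOWED):
    """Return True only if some rule explicitly matches; deny otherwise."""
    s, d = ip_address(src), ip_address(dst)
    for rule in rules:
        if (
            s in ip_network(rule.src)
            and d in ip_network(rule.dst)
            and proto == rule.proto
            and port == rule.port
        ):
            return True
    return False  # default deny
```

Because the function falls through to `False`, adding a new kind of cross-datacenter traffic always requires an explicit new rule.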

OpenVPN & Vyatta

There are a number of tunnelling options available for Linux, and we went with OpenVPN because it suits our needs (everything is IP, authentication makes sense for us), it’s flexible (supports site-to-site and remote access, which we’ll want as well), and it’s fairly standard. We also chose to use Vyatta rather than mix together everything we need on a standard Linux box. The reason for this is complexity management: Vyatta is a Linux system with a set of networking/routing tools that are designed to work well together, including OpenVPN, VRRP (Virtual Router Redundancy Protocol, for high availability), and stateful firewalls.

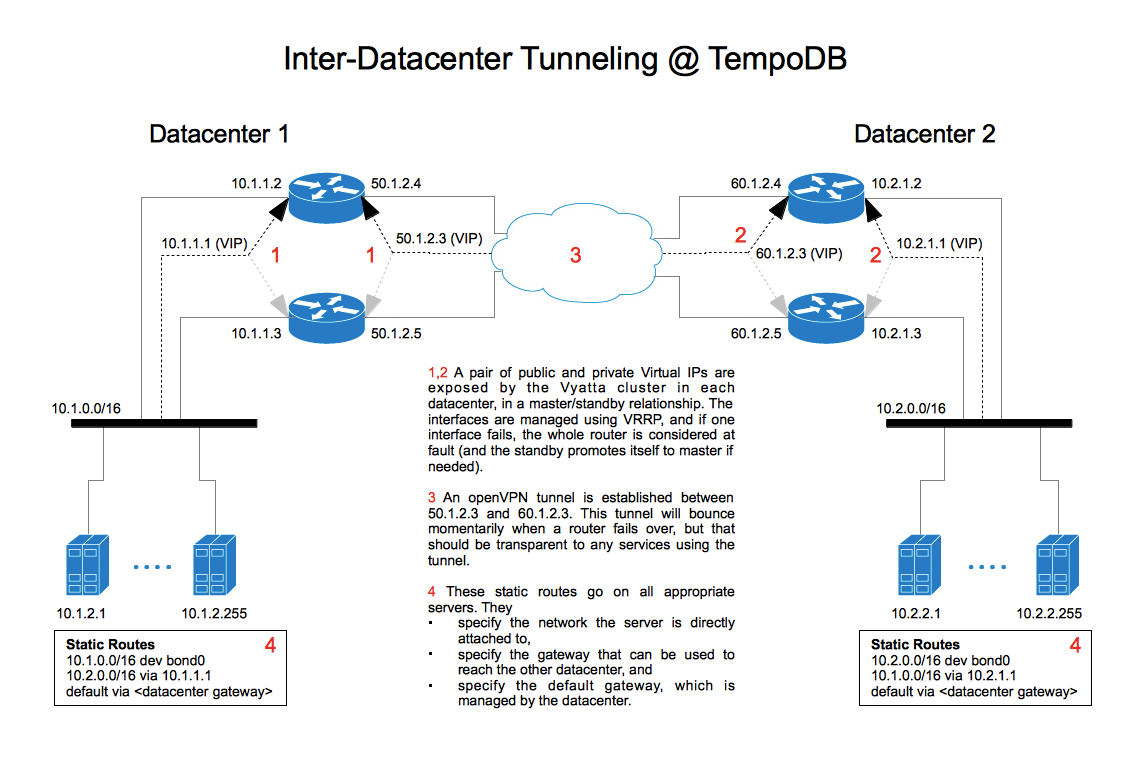

Solution Overview

The diagram shows where we ended up. Each datacenter has a pair of Vyatta servers, and each pair exposes a public and a private virtual IP (VIP). An encrypted OpenVPN tunnel is maintained between the two public VIPs, and it can fail over automatically if a box goes down. There is a firewall on both ends of the pipe that permits only a short list of allowed traffic types.

Local Routing

On its own, the tunnel only allows the Vyatta servers to talk across the pipe. For other servers to make use of the connection, they need local static routes that use the Vyatta pair as the gateway for traffic bound for the other datacenter (see the diagram for an example). In other words, most servers don’t know anything about the tunnel; they just know that Vyatta can send data to the other datacenter. The default gateway for each server is controlled by our datacenter; otherwise we could have just put the routes there (instead of propagating them to every box). Alternatively, we could set up our Vyatta cluster as the default gateway, which we may do at some point.
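To make the routing decision on an ordinary server concrete, here is a minimal sketch of a route lookup using longest-prefix matching. The addresses are made up for illustration: `10.2.0.0/16` stands in for the remote datacenter, `10.1.0.254` for the local Vyatta private VIP, and `10.1.0.1` for the datacenter-controlled default gateway. The `next_hop` helper is hypothetical and ignores directly connected subnets.

```python
from ipaddress import ip_address, ip_network

# Hypothetical routing table on one server: (destination network, gateway).
ROUTES = [
    ("0.0.0.0/0", "10.1.0.1"),      # default gateway, owned by the datacenter
    ("10.2.0.0/16", "10.1.0.254"),  # static route: remote DC via the Vyatta VIP
]


def next_hop(dst, routes=ROUTES):
    """Pick the gateway of the most specific route that contains dst."""
    addr = ip_address(dst)
    matches = [
        (ip_network(net), gateway)
        for net, gateway in routes
        if addr in ip_network(net)
    ]
    if not matches:
        raise LookupError(f"no route to {dst}")
    # Longest prefix wins, so the /16 static route beats the /0 default.
    _, gateway = max(matches, key=lambda m: m[0].prefixlen)
    return gateway
```

With this table, traffic for the remote datacenter goes to the Vyatta VIP and everything else continues to use the default gateway, which is why the server never needs to know the tunnel exists.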

Static vs Dynamic Routing

For now, we’re using static routing, which means there is a single IP that servers send all traffic through on each end of the tunnel. Another option (which we may go to eventually) would be to use a dynamic routing protocol (probably OSPF). This would allow us to balance our bandwidth across multiple outbound routes, instead of a single master. Our datacenter handles high availability of our public network access, but if we had direct connections to multiple uplinks, a dynamic routing protocol such as OSPF would allow us to seamlessly use both.
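One common way routers spread traffic over several equal-cost routes is to hash each flow onto a next hop, so that packets of one connection stay on one path. The sketch below illustrates only that idea; it is not how OSPF computes routes, and the `pick_next_hop` function, the flow tuple layout, and the gateway addresses are assumptions made for the example.

```python
import hashlib


def pick_next_hop(flow, next_hops):
    """Map a flow (src, dst, proto, sport, dport) to one of several next hops.

    The same flow always hashes to the same next hop, which keeps the
    packets of a single connection on a single path.
    """
    if not next_hops:
        raise ValueError("at least one next hop is required")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(next_hops)
    return next_hops[index]


# Hypothetical uplinks and flow, for illustration only.
uplinks = ["10.1.0.253", "10.1.0.254"]
flow = ("10.1.3.4", "10.2.0.9", "tcp", 51514, 5432)
chosen = pick_next_hop(flow, uplinks)
```

With static routing there is effectively a single entry in `next_hops`, so every flow takes the same path.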

High Availability

We use VRRP for high availability of Vyatta. The way it works is that a pair of Vyatta servers share a public and a private Virtual IP (VIP). The VIPs float (as a pair) between the two servers as appropriate (if one fails, both VIPs move, and the new master re-establishes the connection).
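The failover behavior can be modeled in a few lines. This is a simplified sketch, not an implementation of VRRP: real VRRP relies on periodic advertisements and timers to detect a failed master, which are reduced here to an `alive` flag. The router names, priorities, and VIP labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Router:
    name: str
    priority: int       # higher priority is preferred as master
    alive: bool = True  # stand-in for "still sending advertisements"


def elect_master(routers):
    """Return the live router with the highest priority, or None."""
    candidates = [r for r in routers if r.alive]
    if not candidates:
        return None
    return max(candidates, key=lambda r: r.priority)


def vip_owner(routers, vips=("public-vip", "private-vip")):
    """Both VIPs always follow the same master, so they move as a pair."""
    master = elect_master(routers)
    return {vip: (master.name if master else None) for vip in vips}


a = Router("vyatta-a", priority=200)
b = Router("vyatta-b", priority=100)
before = vip_owner([a, b])   # both VIPs on vyatta-a

a.alive = False              # the master fails
after = vip_owner([a, b])    # both VIPs move to vyatta-b
```

Tying both VIPs to one election result is what keeps the public and private sides on the same box after a failover.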

Arity

The setup we’ve discussed and diagrammed is for connecting two datacenters, but it is likely that we’ll want multiple connections to a single datacenter. Conveniently, this doesn’t require much extra work. Using the existing router pair and the existing VIP pair, it’s just a matter of adding an extra OpenVPN config entry onto the routers. Using this strategy, there is little extra effort to go from 1 to N connections.

What’s Next?

For us, an inter-datacenter tunnel is an architectural primitive which we can use in other projects. In later posts, we’ll talk about how TempoDB uses this to expand our production environment into multiple data centers (Points of Presence), as well as set up a channel between environments (streaming replication between production and staging environments).